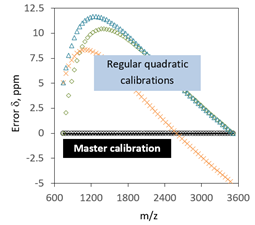

Mass errors generated by regular calibration equations and by the master calibration equation.

Invention Summary:

Because instrument parameters and sample introduction can fluctuate or drift slightly, an instrument must be calibrated before measurements are taken. The most widely used approach establishes a calibration function from a single set of constants obtained by curve fitting. However, the data used to derive those constants are subject to random error, so the resulting calibration equation can deviate from the true relationship and introduce errors into subsequently calculated results.
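As an illustration of the conventional approach, the sketch below fits a single set of constants to one calibration run. It assumes a linear calibration function, which the summary does not specify; the data values and the names `fit_linear` and `calibrate` are hypothetical.

```python
def fit_linear(xs, ys):
    """Ordinary least-squares fit of y = a*x + b; returns (a, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


# Hypothetical calibration standards: raw instrument readings
# paired with their known reference values.
readings = [1.0, 2.0, 3.0, 4.0]
references = [2.1, 3.9, 6.1, 7.9]

# One calibration run yields one set of constants.
slope, intercept = fit_linear(readings, references)


def calibrate(reading):
    """Convert a raw reading using the fitted constants."""
    return slope * reading + intercept
```

Any random error in `references` or `readings` propagates directly into `slope` and `intercept`, and from there into every value returned by `calibrate`.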

A researcher at Rutgers University has developed a simple yet robust calibration method that can minimize or eliminate random errors in calibration. The method consists of obtaining the initial constants for a master calibration; comparing and validating the constants of each subsequent new calibration relative to those of the master calibration; updating the master calibration equation each time with the validated constants; and then applying the updated equation to the new measurement. The method has been validated on different analytical instruments, where it significantly reduced the error.
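The four steps can be sketched roughly as follows. This is not the inventor's implementation: the summary does not say how constants are validated or how the master equation is updated, so the relative-tolerance check, the running-average update, the linear form of the equation, and all names here (`MasterCalibration`, `rel_tolerance`) are assumptions made for illustration.

```python
class MasterCalibration:
    """Illustrative master calibration for y = slope * x + intercept."""

    def __init__(self, initial_constants, rel_tolerance=0.05):
        # Step 1: initial constants for the master calibration.
        self.constants = list(initial_constants)
        self.rel_tolerance = rel_tolerance  # assumed validation threshold
        self.n_accepted = 1

    def is_valid(self, new_constants):
        # Step 2 (assumed rule): each new constant must lie within a
        # relative tolerance of the corresponding master constant.
        for master, new in zip(self.constants, new_constants):
            scale = max(abs(master), 1e-12)
            if abs(new - master) / scale > self.rel_tolerance:
                return False
        return True

    def update(self, new_constants):
        # Step 3 (assumed rule): fold validated constants into a running mean.
        if not self.is_valid(new_constants):
            return False
        self.n_accepted += 1
        self.constants = [
            m + (c - m) / self.n_accepted
            for m, c in zip(self.constants, new_constants)
        ]
        return True

    def apply(self, reading):
        # Step 4: apply the updated master equation to a new measurement.
        slope, intercept = self.constants
        return slope * reading + intercept


master = MasterCalibration([2.0, 0.10])
accepted = master.update([2.02, 0.102])  # close to the master: validated
rejected = master.update([2.50, 0.100])  # slope deviates too far: discarded
corrected = master.apply(10.0)
```

Averaging over validated calibrations is one simple way to let random errors in individual runs partly cancel; other update rules, such as weighted averages, would fit the same outline.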

Advantages:

- Minimizing random errors

- High accuracy

- Easy to integrate into existing calibration toolkits

Market Applications:

- Analytical instruments, especially modern analytical measurements where high accuracy is required

Intellectual Property & Development Status: Patent Pending. Available for licensing and/or research collaboration.